In June 2024, a 22-year-old ex-OpenAI researcher published a 165-page essay predicting AGI by 2027, an $8 trillion annual AI capex curve, and the US government nationalizing the frontier labs. Two years and trillions of dollars of investment later — how much of it actually happened?

The Document That's Quietly Steering Trillion-Dollar Bets

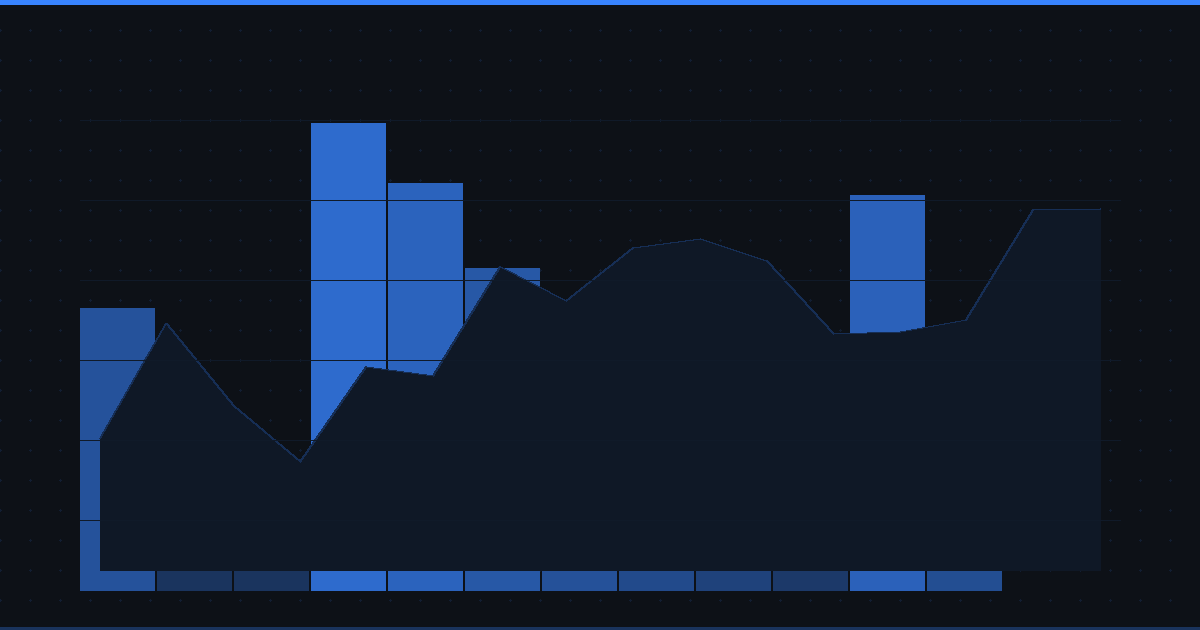

If you've been watching NVIDIA hit $4T, the $500 billion Stargate announcement, hyperscaler capex push past $600B, and the entire US power grid suddenly become a "constraint on AI", you've been watching something that looks remarkably like the curve Leopold Aschenbrenner drew in June 2024.

His essay, "Situational Awareness: The Decade Ahead", is one of the most-cited and most-imitated AI forecasts of the past two years. It has reportedly been read inside the White House, by frontier lab leadership teams, and on the trading floors of funds with a meaningful AI book.

It's also load-bearing for some very specific market bets:

- The "we need a $1T cluster" thesis driving NVIDIA, Broadcom, TSMC, and the entire AI hardware stack

- The "power is the bottleneck" thesis driving utility deregulation plays, nuclear restarts, and gas-turbine orders

- The "China is going to steal it" thesis driving export controls and lab security spending

- The "AGI by 2027" thesis driving datacenter REITs, hyperscaler capex, and the AI labs' fundraising rounds

So whether the essay holds up isn't an academic question. It's directly relevant to a portfolio.

This post is the two-year report card:

- The background — what Aschenbrenner actually said, in plain English

- What came true — the predictions that aged surprisingly well

- What didn't (yet) — the predictions that are slipping or wrong

- The risks — what could break his entire forecast, and what to watch as a trader

Part 1: The Background

Who Is Leopold Aschenbrenner?

Aschenbrenner was a researcher on OpenAI's Superalignment team. He was fired in April 2024; OpenAI attributed the dismissal to an alleged leak, while Aschenbrenner has said it followed a memo in which he raised security concerns. Two months later, he published "Situational Awareness" and shortly after launched an investment firm of the same name, backed by Patrick and John Collison (Stripe), Daniel Gross, and Nat Friedman, betting directly on his thesis.

That last detail matters. He's not a pundit. He's running money against his own forecast.

The Core Thesis in One Paragraph

Compute is scaling at ~0.5 orders of magnitude per year, algorithmic efficiency is scaling at another ~0.5 OOMs per year, and "unhobbling" (reasoning, agents, tool use) adds yet another ~0.5 OOMs. Stack those together and you get GPT-2 to GPT-4-sized capability jumps every 4 years. By 2027, that puts AI at "drop-in PhD-level remote worker" capability. Once that happens, you can run millions of automated AI researchers in parallel, compress a decade of algorithmic progress into a single year, and arrive at superintelligence by 2028-2029. Whoever gets there first wins the century. Therefore the US government will inevitably nationalize the effort by 2027-2028 into a Manhattan-style "Project."
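The stacking arithmetic in that paragraph can be sketched in a few lines. This is a back-of-the-envelope illustration, not a calculation taken from the essay: the rates are the rough figures quoted above, the function names are hypothetical, and treating unhobbling as a steady 0.5 OOM/year that simply adds to the other two is an assumption made for simplicity.

```python
# Back-of-the-envelope OOM stacking. Rates are the rough figures quoted
# above; treating "unhobbling" as a steady per-year rate is an assumption.
COMPUTE_OOM_PER_YEAR = 0.5
ALGO_OOM_PER_YEAR = 0.5
UNHOBBLING_OOM_PER_YEAR = 0.5  # assumption: the text gives no firm annual rate


def stacked_ooms(years: float, *rates_per_year: float) -> float:
    """Total orders of magnitude gained when independent rates add up."""
    return years * sum(rates_per_year)


def as_multiplier(ooms: float) -> float:
    """Convert orders of magnitude into a plain multiplier (10 ** ooms)."""
    return 10.0 ** ooms


# Compute plus algorithmic efficiency over a four-year window.
base_ooms = stacked_ooms(4, COMPUTE_OOM_PER_YEAR, ALGO_OOM_PER_YEAR)

# Same window with the assumed unhobbling rate stacked on top.
full_ooms = stacked_ooms(
    4, COMPUTE_OOM_PER_YEAR, ALGO_OOM_PER_YEAR, UNHOBBLING_OOM_PER_YEAR
)

print(f"compute + algorithms: {base_ooms} OOMs "
      f"(~{as_multiplier(base_ooms):,.0f}x effective compute)")
print(f"with unhobbling:      {full_ooms} OOMs")
```

Half an OOM per year is about a 3.2x annual gain, so compute and algorithms alone compound to roughly 10,000x of effective compute over four years. That is the scale of jump the thesis equates with GPT-2 to GPT-4.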

The Five Big Predictions

| # | Prediction | Aschenbrenner's Timeline |
|---|---|---|
| 1 | AGI ("drop-in remote worker") | End of 2027 |
| 2 | Intelligence explosion → superintelligence | 2028-2029 |
| 3 | Trillion-dollar single training cluster | 2030 |
| 4 | $8T/year industry-wide AI capex | 2030 |
| 5 | USG forms "The Project": labs merge into national consortium | 2027-2028 |

Two years in, with ~18 months left until his hard 2027 deadline, let's grade.

Part 2: What Came True

This is the part of the essay that aged best. It's not close.

✅ Compute Scaling Tracked His Curve

Aschenbrenner predicted ~1 million H100-equivalent training clusters by 2026. As of early 2026, multiple announced or operational mega-clusters land in that range:

- xAI's Colossus scaled from 100K to 200K H100s in 2024-2025, with a roadmap to 1M+

- Stargate (OpenAI + SoftBank + Oracle + MGX) committed $500B over four years, with the Abilene, Texas site built for multi-gigawatt scale

- Anthropic's Project Rainier with Amazon committed to 1M+ Trainium2 chips

- Meta's Hyperion datacenter targeting 5GW

His "1 GW per cluster by 2026" call: hit. His "10 GW by 2028" call: visibly under construction.

Investor takeaway: this is why power-adjacent stocks (VST, CEG, TLN), HVAC/cooling (VRT), grid equipment (ETN, GEV), and the broader "shovels for the data center build-out" trade have worked. The capex curve is real.

✅ The "Unhobbling" Bet Aged Beautifully

Of all his predictions, this is the most prescient. In June 2024 — three months before OpenAI launched o1 — Aschenbrenner specifically called out "test-time compute overhang" as a major OOM source. He argued that unlocking longer chains of reasoning at inference time could be worth multiple orders of magnitude.

Then o1 shipped in September 2024. Then o3 in late 2024/early 2025. Then DeepSeek R1 in January 2025. Then Claude's extended thinking. Then Gemini's thinking modes. The entire frontier shifted to reasoning-via-RL as the dominant scaling axis.

He called the paradigm shift before it had a name.

✅ Algorithmic Efficiency Curve Held (and Then Some)

Aschenbrenner projected ~0.5 OOM/year of algorithmic efficiency. DeepSeek V3 and R1 — trained at a small fraction of frontier-lab budgets — validated this curve hard. Inference costs across the industry dropped roughly 10x in 2025 alone. Open-weight models compete with closed-weight frontier models within a few months of release.

✅ China as a Real Competitor

When Aschenbrenner wrote the essay, the consensus was "China is 18-24 months behind, and chip controls will keep them there." DeepSeek's January 2025 R1 release, and its brief run at #1 on the App Store, made his "China is a serious competitor" framing look conservative, not alarmist.

His specific framing — algorithmic secrets matter as much as raw compute — was vindicated by an outcome he didn't quite predict: not stolen secrets, but independent algorithmic innovation under chip constraints.

✅ Capability Trajectory on Benchmarks

Frontier models in 2026 saturate most academic benchmarks and hit PhD-qualifying-exam-level performance on physics, chemistry, and math, while METR's autonomous-task-length curve has been doubling on a roughly 4-7 month cadence. If that holds another 18 months, we hit human-day-scale autonomous tasks by late 2027, which is essentially what he predicted.
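To see what that doubling cadence implies, here is a minimal extrapolation sketch. The 2-hour starting task length is a hypothetical placeholder, not a METR figure, and the function names are illustrative. The model is a naive constant-doubling curve, which is exactly the assumption that may fail.

```python
# Naive extrapolation of an autonomous-task-length curve that doubles at a
# fixed cadence. START_HOURS is a hypothetical placeholder, not a METR number.
START_HOURS = 2.0
HORIZON_MONTHS = 18.0


def doublings(horizon_months: float, doubling_months: float) -> float:
    """Number of doublings that fit in the horizon at a given cadence."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return horizon_months / doubling_months


def projected_task_hours(start_hours: float, horizon_months: float,
                         doubling_months: float) -> float:
    """Task length after the horizon, assuming the cadence holds exactly."""
    return start_hours * 2.0 ** doublings(horizon_months, doubling_months)


for cadence in (4.0, 7.0):  # fast and slow ends of the quoted range
    n = doublings(HORIZON_MONTHS, cadence)
    hours = projected_task_hours(START_HOURS, HORIZON_MONTHS, cadence)
    print(f"{cadence:.0f}-month doubling: {n:.1f} doublings, "
          f"~{hours:.0f} hours per task")
```

Under the placeholder 2-hour start, both ends of the cadence range land above an 8-hour working day after 18 months. The result is sensitive to the starting value and to whether the cadence actually holds.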

Part 3: What Didn't Come True (Yet)

This is where the report card gets bumpier.

❌ "The Project" Hasn't Materialized

Aschenbrenner predicted that by 2027-2028, the US government would orchestrate a Manhattan-style consortium where the frontier labs voluntarily merge under federal coordination, with Congress appropriating trillions and security clearances mandatory for researchers.

What actually happened:

- Stargate is a private-sector partnership with public political support, not a federal program

- Export controls tightened multiple times — but no nationalization

- Frontier labs are still independent. OpenAI, Anthropic, Google DeepMind, xAI, Meta — still competing, not consolidated

- Lab security improved but is nowhere near the SCIF-grade infrastructure he argued was urgent

His sociological prediction is running visibly behind his technical prediction. Which is the classic forecaster failure mode — overestimating how fast institutions move, underestimating how long technical trends compound.

❌ "Drop-In Remote Worker" Is Still Aspirational

Frontier models can now write production-quality code, perform multi-hour autonomous tasks under supervision, and crush academic benchmarks. They cannot yet replace a junior knowledge worker for a full week of fuzzy, context-dependent work without hand-holding.

The gap is about reliability, not raw intelligence. Andrej Karpathy has described this as a "march of nines": going from 95% reliable to 99.9% reliable on long-horizon tasks may be a different scaling regime than going from 80% to 95%.

This matters for the 2027 AGI call. If reliability scales sub-linearly with capability, the "drop-in worker" milestone slips by years even as benchmark scores keep climbing.
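A toy model shows why the extra nines matter so much on long tasks. It assumes every step succeeds independently with the same probability and that a single failure sinks the task, with no error recovery. That is a simplification for illustration, not a claim about how any real agent behaves.

```python
import math

# Toy model: a long-horizon task is a chain of steps that must all succeed.
# Independence and "one failure sinks the task" are simplifying assumptions.


def task_success_probability(per_step_reliability: float, steps: int) -> float:
    """Probability that every one of `steps` independent steps succeeds."""
    return per_step_reliability ** steps


def steps_at_even_odds(per_step_reliability: float) -> float:
    """Chain length at which overall success drops to 50%."""
    return math.log(0.5) / math.log(per_step_reliability)


for p in (0.80, 0.95, 0.999):
    print(f"per-step {p:.1%}: "
          f"100-step success {task_success_probability(p, 100):.2%}, "
          f"even odds at ~{steps_at_even_odds(p):.0f} steps")
```

With 100 dependent steps, 95% per-step reliability completes the task well under 1% of the time, while 99.9% completes it about 90% of the time. In this toy model, the jump that looks small on a benchmark is the one that decides whether long tasks finish at all.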

❌ The Intelligence Explosion Hasn't Started

Aschenbrenner's most consequential claim was that once you hit AGI, you can run 100 million automated AI researchers at 10-100x human speed and compress a decade of algorithmic progress into a single year.

As of mid-2026: AI is meaningfully accelerating coding (look at Claude Code, Cursor, Devin, GitHub Copilot's agentic mode). It's helping with some research tasks. It's not running the labs.

His 2028-2029 explosion timeline is still possible but requires the AGI step to happen first, on schedule, plus the explosion to not bottleneck on compute, taste, or experimental throughput. That's a lot of conditional probability multiplying in the wrong direction.

❌ "Compute Is Destiny" — DeepSeek Complicated This

His Project chapter rests on the assumption that whoever has the trillion-dollar cluster wins. DeepSeek showed that a chip-constrained team with strong engineering can land in striking distance of frontier capability for a fraction of the cost.

If algorithmic efficiency continues outrunning hardware scaling, and there's reason to think it will, then "the trillion-dollar cluster" becomes less of a moat than he priced in. That is good news for AI capability broadly, but bad news for the narrative that hyperscaler capex will earn a normal return on capital.

Part 4: The Risks to His Entire Forecast

This is the section that matters most for investors. There are six ways the whole framework could break, in roughly increasing order of severity.

Risk 1: Reliability Doesn't Scale With Capability

If the gap between "passes the benchmark" and "reliably does the job" turns out to be 2-4 OOMs of additional effective compute, AGI slips from 2027 to 2030+ — and the entire intelligence-explosion timeline slides with it. The capex justifications start looking very different if the ROI horizon stretches by 3-5 years.
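The slip estimate follows from dividing the gap by the pace of effective compute. A rough sketch, assuming effective compute grows at about 1 OOM per year (the ~0.5 compute plus ~0.5 algorithmic figures from the core thesis); the function name and the 2027 baseline constant are illustrative.

```python
# Rough timeline-slip arithmetic. The 1 OOM/year default combines the ~0.5
# compute and ~0.5 algorithmic rates quoted earlier; it is an assumption.
BASELINE_AGI_YEAR = 2027


def years_of_slip(gap_ooms: float, effective_oom_per_year: float = 1.0) -> float:
    """Years needed to close a reliability gap measured in effective OOMs."""
    if effective_oom_per_year <= 0:
        raise ValueError("effective_oom_per_year must be positive")
    return gap_ooms / effective_oom_per_year


for gap in (2.0, 4.0):  # low and high ends of the gap quoted above
    slip = years_of_slip(gap)
    print(f"{gap:.0f} OOM gap: +{slip:.0f} years, "
          f"AGI around {BASELINE_AGI_YEAR + slip:.0f}")
```

At that pace a 2-4 OOM gap pushes the milestone to roughly 2029-2031, and a slower effective-compute rate stretches it further.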

What to watch: METR's task-length curve, real-world enterprise AI deployment numbers (not pilots), AI's actual share of revenue at frontier labs vs. their training spend.

Risk 2: Algorithmic Efficiency Eats the Compute Moat

If DeepSeek-style efficiency gains keep compounding, the trillion-dollar single-cluster argument evaporates. You don't need $1T for AGI; you need $50B and great engineering. Hyperscalers built infrastructure for a moat that may not exist.

What to watch: training cost of each frontier model release, open-weight performance vs. closed-weight (the gap should widen if scale matters; it's been narrowing), Chinese model performance under US export controls.

Risk 3: The Data Wall Bites Harder Than He Modeled

Aschenbrenner waved off the data wall, citing "all the labs are working on it" and Dario Amodei's confidence. The bear case: synthetic data and RL on verifiable rewards work great for math and code (verifiable domains) but don't generalize to fuzzy tasks (research taste, design, project management) — which are exactly what "drop-in remote worker" requires.

If that's true, we get narrow superhuman AI in verifiable domains by 2027 — which is huge — but not general AGI. The market reaction would be: AI is a productivity tool (good but not paradigm-shifting), not a labor replacement (paradigm-shifting). Very different multiples.

What to watch: AI performance on tasks without clear ground truth (creative writing quality assessments, research originality, long-horizon project completion).

Risk 4: "The Project" Failure Mode

If the US government doesn't nationalize the labs, three things happen that break his strategic story:

- Lab security stays at "level 0" → algorithmic secrets keep leaking → US lead compresses

- No coordination on safety during the explosion → race-to-the-bottom dynamics

- No nonproliferation regime → multiple superintelligences instead of one decisive lead

Aschenbrenner's whole geopolitical chapter assumes the USG mobilizes. If it doesn't — and current trajectory suggests it won't on his timeline — then we're in a multi-polar AGI world, which is the scenario he says is most dangerous.

What to watch: any move toward formal USG coordination (procurement, security clearance mandates, federal AI infrastructure), and the political response to the next major AI capability surprise.

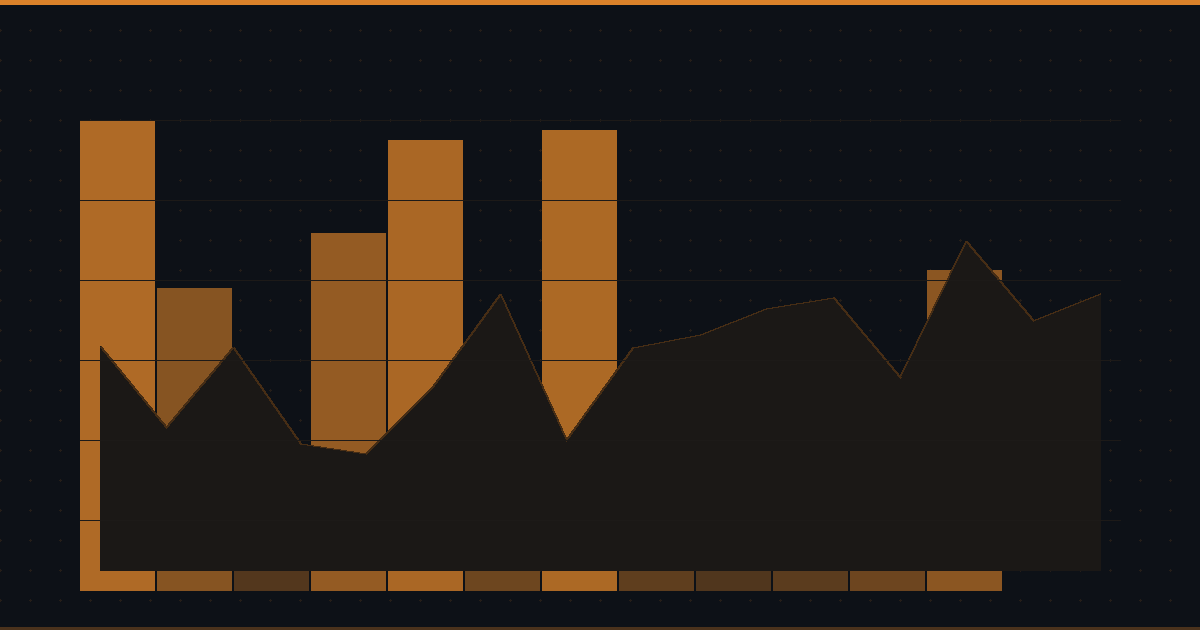

Risk 5: Capex Outruns Revenue

His curve says $8T/year of AI capex by 2030. Industry revenue from AI in 2025 was, generously, $50-100B. The bull case is that the capex precedes revenue and the J-curve eventually closes. The bear case is that we're building cathedrals for a religion that doesn't have enough congregants yet.
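The size of that gap is easier to judge as a growth rate. The sketch below uses the figures quoted above plus one loudly hypothetical yardstick: that AI revenue would need to equal annual capex by 2030. The essay and this post do not specify a break-even level, so treat the target as a placeholder you can change.

```python
# Capex-versus-revenue gap, in USD billions. Inputs are the figures quoted
# above; "revenue must match annual capex by 2030" is a hypothetical yardstick.
CAPEX_2030 = 8_000.0
REVENUE_2025_RANGE = (50.0, 100.0)
YEARS = 5  # 2025 -> 2030


def required_annual_growth(start: float, target: float, years: int) -> float:
    """Constant yearly multiplier that takes `start` to `target` in `years`."""
    return (target / start) ** (1.0 / years)


for revenue in REVENUE_2025_RANGE:
    ratio = CAPEX_2030 / revenue
    growth = required_annual_growth(revenue, CAPEX_2030, YEARS)
    print(f"2025 revenue ${revenue:.0f}B: capex is {ratio:.0f}x revenue, "
          f"needs ~{growth:.2f}x revenue growth per year")
```

Under that yardstick, revenue would have to multiply by roughly 2.4x to 2.8x every year for five straight years. A less demanding target lowers the bar, but the order of magnitude is what the J-curve argument has to clear.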

If meaningful revenue doesn't materialize by 2027, capex contracts hard and the entire infrastructure stack — chips, power, datacenter — re-rates. This is the bubble case and it's not crazy.

What to watch: AI revenue at frontier labs (OpenAI's annualized revenue trajectory, enterprise contract sizes), hyperscaler ROI on AI capex (it has to start showing up), CFO commentary on whether AI capex is being justified by current revenue or future expectation.

Risk 6: He's Right About the Capability — Wrong About Who Captures the Value

Even if AGI arrives on his timeline, the value capture story is unclear. If algorithmic efficiency keeps improving, the marginal capability comes from open-weight models or rapid-follower companies — not the original developers. NVIDIA might capture it. The labs themselves might not.

This is the most uncomfortable risk because it can be true while everything else in the essay is also true. AGI happens, the world transforms, and the trade that worked in 2024-2026 doesn't work in 2027-2030 because the value migrates downstream.

What to watch: gross margin trends at frontier labs, time-from-frontier-release to open-weight match (it's been compressing), where AI revenue actually accrues in the value chain.

Two-Year Report Card

| Prediction | Status | Notes |
|---|---|---|
| Compute scaling curve (~0.5 OOM/yr) | ✅ On track | 1M-class clusters operational or under construction |
| Power buildout (~1 GW per cluster by 2026) | ✅ Hit | Stargate/Hyperion-class sites under construction |
| Test-time compute as major OOM source | ✅ Hit ahead of schedule | o1, o3, R1, extended thinking; entire paradigm shifted |
| Algorithmic efficiency (~0.5 OOM/yr) | ✅ Hit, possibly ahead | DeepSeek demonstrated more headroom than priced in |
| China as competitor | ✅ Vindicated | DeepSeek validated the framing |
| Benchmark saturation (PhD-level) | ✅ Hit | Most academic benchmarks saturated |
| AGI ("drop-in worker") by 2027 | ⚠️ Possible but reliability gap looks bigger than he priced | 18 months to test |
| Intelligence explosion 2028-2029 | ⚠️ Speculative; depends on AGI hitting first | Auto-research is real but small-scale |
| Trillion-dollar clusters by 2030 | ✅ On capex trajectory | Stargate $500B/4yr is on the curve |
| "The Project" by 2027-2028 | ❌ Behind schedule, possibly never | Direction is consistent, magnitude isn't |
| Lab security overhaul | ❌ Not happening | Labs still at startup-grade security |

Net read: His technical predictions aged better than his sociological predictions. The capability curve is roughly on track. The institutional response is far behind what he expected. This is the standard failure mode for technically-strong forecasters — accurate on the science, optimistic on diffusion and politics.

What This Means for Investors

Three actionable conclusions:

1. The infrastructure trade has worked because the OOM curve is real. Power, cooling, networking, datacenter, chip fabrication — these aren't bubble names if Aschenbrenner's compute curve continues. They are bubble names if the J-curve to AI revenue doesn't close by 2027-2028. Track AI revenue conversion as the leading indicator.

2. The "winner take all" framing is wrong. DeepSeek broke the moat thesis. Algorithmic efficiency is compounding faster than he modeled. The trillion-dollar cluster is necessary but not sufficient. The 2027-2030 trade is probably more about who captures the productivity gains than who has the biggest cluster. That's a downstream/applications trade, not just an upstream/infrastructure trade.

3. Watch the reliability curve, not just the capability curve. Benchmark saturation is what gets headlines. Multi-day autonomous task reliability is what justifies AGI capex. They are not the same metric. If the second one slips, his entire 2027-2029 timeline does too — and the capex story re-rates.

The full essay is free to read at situational-awareness.ai. Whether you agree with him or not, it's the most-cited document on AI futures of the past two years, and the people building the world he describes — the labs, the hyperscalers, and the funds — are reading it carefully.

You should too.
